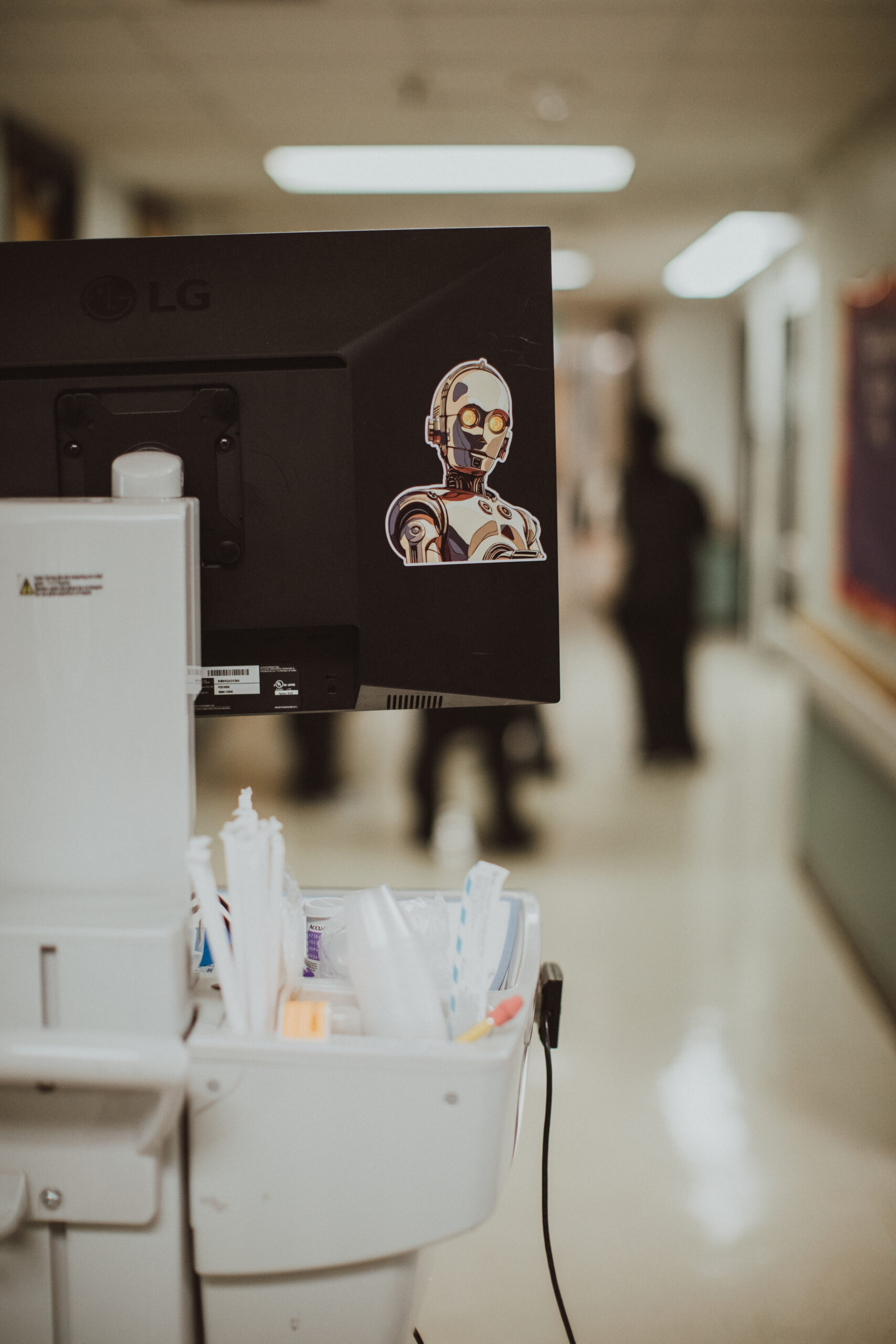

The titans of industry, whom I do not trust, would have us believe that the equivalent of C-3PO will arrive, just around the proverbial corner, to improve health care. How wonderful!

You might remember the fussy, shiny droid, the golden companion of R2-D2 in the Star Wars films. Fluent in over six million forms of communication, C-3PO bubbled with personality, but it was his “desire” to do the right thing that made him special. As a fellow rule-follower, I was charmed by this robot in the 1980s. Could the future bring us such a well-intentioned invention?

Lately, so-called artificial intelligence (AI) is at the front of my mind, with nonstop advertisements telling me just how great this development is going to make our lives. Is it? AI companies act as if computer algorithms, ones that require massive amounts of electricity and biblical volumes of water, are going to make the human aspects of health care better. Or maybe make them obsolete. Will each of us have our very own C-3PO for health care?

The power of organic intelligence

The fundamental core of health care is human emotion and, the way I see it, this is the most precious substance in the galaxy. This organic intelligence (OI) is powered by neurons, ATP, thoughts, feelings, memories, and, in my case, caffeine. Our ancestors harnessed OI and created human-human relationships to survive on a cold, cruel planet. Who the hell knows how they actually did this, but we have remained alive gazing up at the Milky Way for millennia.

This fact is so obvious and fundamental to our success as a species, and yet much of the IT industry gazes past one person, the patient, narrating a story of illness, and another, the practitioner, thoughtfully listening. This organic dyad has persisted intact even as we discovered microbes, anesthesia, antibiotics, and radiation as both injury and therapy.

As long as humans have been breathing, one suffering person has turned to another, and we cannot be bamboozled by computer companies telling us that their machines can, or could, do this better. Our OI dyad promotes healing, community, and the alleviation of suffering. It is an imperfect relationship and things can, and often do, go wrong.

In my career, people have confessed to me an ocean of pain, spilling waves of regret, mistakes, transgressions, lies, dreams, and hallucinations, in addition to simpler things like blood pressure, diabetes, and asthma. They sought my counsel, the one that radiates from my humanity, my ability to process verbal communication, read nonverbal cues, and triangulate the arc of their lives, placing it all within their particular value systems.

Corporate data and privacy concerns

As a medical doctor with receding hair and bifocals, I am very worried about the infiltration of AI into health care. Operating under the mistaken notion that AI is going to be a benevolent droid, we are volunteering our worries, medications, menstrual cycles, lab results, biopsies, mental health problems, and family cancer histories into machines that, ultimately, are owned by corporations.

Let us forget for a moment that this information, much of it personal, is being collected and stored by Amazon, Google, Facebook, Microsoft, Apple, Starlink, OpenAI, and others. These companies do not exist to screen the population for cancer. Their mission is not to educate the public about their health. They are not currently fighting disinformation and promoting social harmony. They are not, actually, supporting your mental health. Do you honestly believe that ChatGPT has the capacity to “care” for you?

“OpenAI says more than 230 million users already ask ChatGPT health and wellness questions every week,” The Washington Post reported in January 2026. ChatGPT, obviously, is not a “health care provider” and, thus, is not subject to federal health privacy protections under the Health Insurance Portability and Accountability Act of 1996, most commonly known as HIPAA.

Recently, Mehmet Oz, the administrator of the Centers for Medicare & Medicaid Services, suggested that AI avatars will be essential to meeting the needs of rural communities like those in West Virginia, where I live. “There is no question about it; whether you want it or not the best way to help some of these communities is going to be AI-based avatars,” Oz said at an event hosted by Action for Progress. He did not stop there, saying, “We can use robots to do ultrasounds on pregnant women,” a statement that performs a shotgun wedding of my fears of technology and historic inequities in Appalachia.

In the past 20 years, has Facebook improved your health? If you believe that it has, ask yourself how much you trust this entity. And while Amazon is spectacularly good at delivering dog food and Christmas presents, do you want them to know all your medications and doctors’ names? This is to say nothing of upstart AI entities in far-flung parts of the globe, organizations that strike me as being as friendly as the Death Star.

The limitations of large language models

Large language models (LLMs) are the foundation of today’s AI chatbots, harnessing the power of data sets to perform a neat parlor trick: predicting the most likely next word. This is how these machines “write” text that is, let us say, human-like. It is not, actually, writing, and LLMs treat every word the same, merely noting how and when each is, statistically, used in many situations. How is this a problem?

Artificial “intelligence” is fundamentally limited, unable to predict the next words that someone will say when they are talking about their dying mother. The same is true when narrating a story about their postpartum depression. Or sharing details about their drug use. Or talking about, for the first time ever, being raped. The narrators of these painful stories do not even know themselves what words come next as they try, in the presence of a kind human, to make meaning of their experience. And this meaning-making comes precisely from the words. It is all unpredictable and messy, the vocabulary at the heart of life’s chaos and beauty.

This is the opposite of algorithms: This is humanity. It is organic and imperfect, but it often comes with a warm embrace and “we will figure this out together.” Do you believe AI apps like Grok, already famous for undressing women and children, will do this for you? Does “Dr.” Mehmet Oz actually believe that blue-collar Americans are going to trust computers with their health care? Might I remind him that these same citizens, my beloved people, struggled to trust messengers like me in the maelstrom of COVID-19. Talk to an LED screen about your diabetes or drinking problem? Give me a break.

I have no idea what I am going to say the next time I listen to a patient’s story. An AI avatar will always have a preformed response, but I do not have to. And I do not want to. With great faith in the organic intelligence dyad that has kept us alive, I know that my heart and my internist brain, provided I have had my coffee, will do their best to find the right words. And even if they are not the “right” or the “best” words, I know that they will be caring and kind, possessing a warmth that computers and droids, even the C-3POs of the galaxy, can never feel. And this, I am sure in my bones, is how we will survive.

This article is part of an ongoing series featuring the photography of Molly Humphreys. Find hundreds of additional photos on the Healthcare is Human social media channels and podcast.

Ryan McCarthy is an internal medicine physician.
