Diagnostic radiology, as a physician-staffed specialty, will not exist in its current form within 20 years. Neither will diagnostic pathology. Neither, in all likelihood, will the outpatient model of endocrinology or general internal medicine as we currently understand it. These are not fringe predictions from technologists who have never set foot in a hospital; they are the logical endpoint of capability curves that are already clearly in motion, applied to clinical workflows that are, at their core, pattern recognition problems dressed in white coats.

I know that will make a lot of my colleagues uncomfortable. I get it. But I would argue the real problem is not the prediction; it is that we keep avoiding the conversation. After 20 years practicing emergency medicine, I am convinced the reorganization of medicine by artificial intelligence is not some far-off disruption. It is already underway, it will be highly uneven across specialties, and its internal logic is more predictable than most physicians want to admit. Understanding that logic, tier by tier, specialty by specialty, is quickly becoming a professional obligation, not just an intellectual exercise.

The framework: What does the specialty actually do?

The key to predicting artificial intelligence displacement is not specialty prestige, compensation, or training length. It is the nature of the cognitive task being performed. I propose a rough taxonomy.

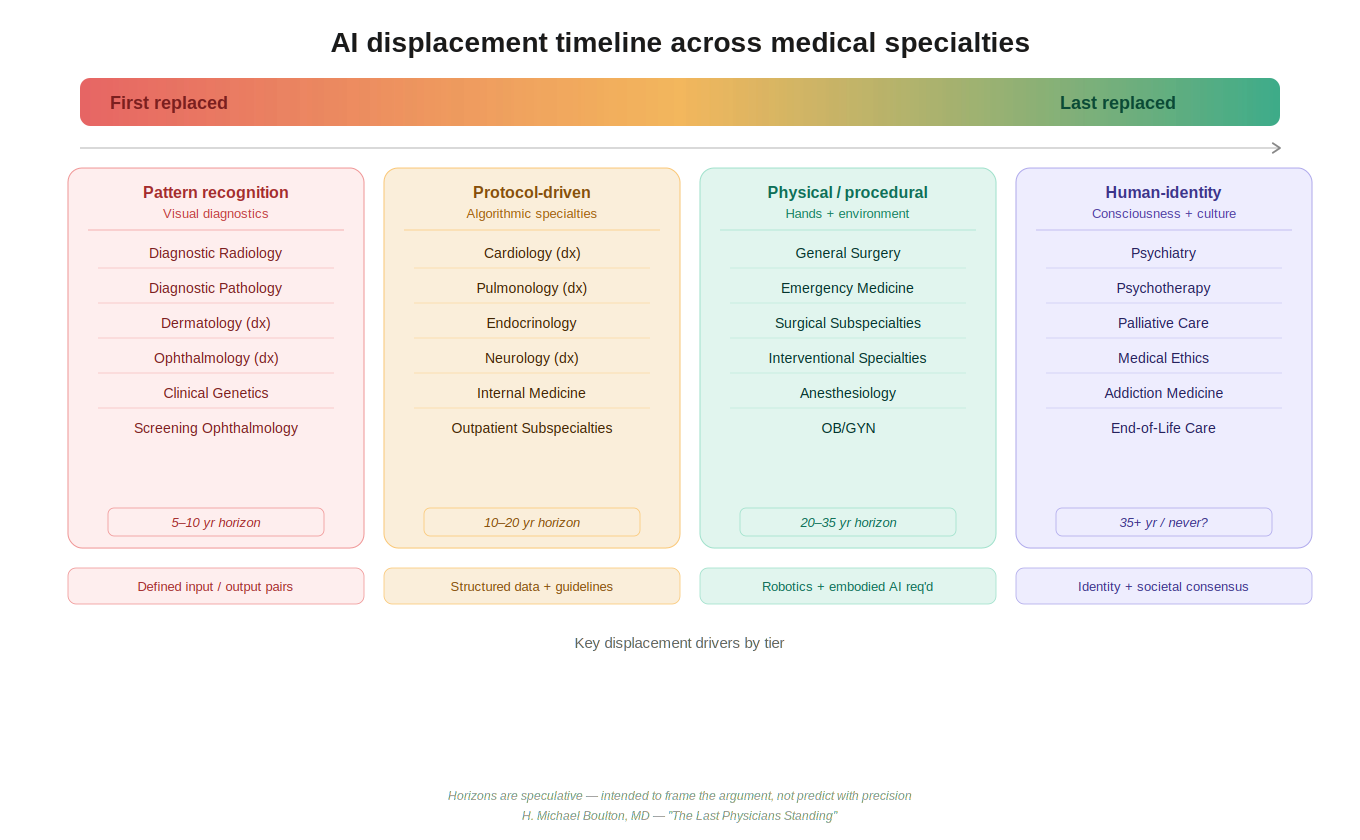

- Tier 1 comprises the pattern recognition specialties: diagnostic radiology, pathology, dermatology, screening ophthalmology, clinical genetics, where a bounded, high-resolution input is processed to produce a categorical output. This is precisely the kind of task deep learning neural networks were built to do.

- Tier 2 covers the protocol-guided specialties: cardiology, endocrinology, pulmonology, outpatient internal medicine, where lab values, imaging data, and clinical history are applied against evidence-based algorithms.

- Tier 3 is the domain of physical intervention in dynamic environments: surgery, emergency medicine, anesthesiology, obstetrics, and the interventional specialties, work that requires dexterous, embodied action in a biological environment that is perpetually surprising.

- Tier 4 is what I would call the human-identity specialties: psychiatry, palliative care, addiction medicine, psychotherapy, domains that engage not merely with disease in a body but with a person’s constructed sense of self, their cultural framework, their grief, their relationships, their will to live.

Each tier faces a different threat profile, a different augmentation opportunity, and a fundamentally different long-term trajectory.

Pattern recognition will fall first, but not before it expands access

Let us be direct: Diagnostic radiology, as currently practiced, is in genuine existential danger. The performance of artificial intelligence systems on visual diagnostic tasks is not marginal; it is frequently superior. Food and Drug Administration-cleared artificial intelligence tools already flag diabetic retinopathy, pulmonary nodules, intracranial hemorrhage, and breast cancer on screening mammography with sensitivity and specificity that match or exceed those of fellowship-trained subspecialists. They operate continuously, without fatigue, and without the anchoring bias that plagues experienced readers late in a long reading session.

But before displacement arrives in full, there is a transitional phase worth examining on its own terms, and it is one with genuine benefits for patients. In the near term, artificial intelligence augmentation dramatically expands what a single radiologist can accomplish. A physician who previously reviewed 200 studies in a shift, prioritizing them manually by their timestamps in the queue, can with artificial intelligence assistance triage the entire queue by acuity in real time, focus cognitive effort on the highest-yield and most ambiguous cases, and substantially reduce turnaround time for routine studies.

The same logic applies across Tier 1: A dermatologist equipped with artificial intelligence-assisted tele-dermatology tools can extend their diagnostic reach to rural and underserved populations who currently wait months for specialist access. A pathologist with artificial intelligence flagging probable malignancies on whole-slide images can review more cases with greater confidence and reserve their expertise for the genuinely difficult ones. This augmentation phase is not merely a consolation prize before replacement. For a period of years, perhaps a decade or more in some settings, artificial intelligence-physician collaboration in Tier 1 specialties will increase access, reduce error rates, and stretch a chronically undersupplied specialist workforce further than was previously possible. It is a meaningful interim benefit and deserves recognition as such.

The argument that artificial intelligence will augment rather than replace radiologists has merit in the near term but becomes less persuasive with each passing year. The augmentation argument assumes that the physician's role is to catch what the artificial intelligence misses. But as deep learning architectures continue to mature, and particularly as next-generation reasoning models move beyond pattern matching toward genuine inferential chains over complex multimodal data, the calculus inverts: The physician's review risks becoming the source of error rather than the safety net. The architectural leap from narrow image classifiers to large multimodal reasoning models capable of integrating imaging data, clinical history, laboratory values, and genomic information simultaneously represents a qualitative shift, not merely a quantitative one. When that integration matures, the case for keeping a human in the diagnostic loop for routine studies becomes very difficult to make. The timeline for functional artificial intelligence parity in Tier 1 roles: five to 10 years. Structural workforce displacement (the reduction in residency slots, the reorganization of billing models) will lag by a decade, but it will follow.

The algorithmic specialists face a slower but similar arc

Tier 2 specialties occupy a middle position. They are not as immediately vulnerable as the purely visual diagnostic fields, but they are more structurally exposed than they may appear. The practice of outpatient cardiology, endocrinology, and general internal medicine is, at its core, the application of evidence-based guidelines to structured inputs: laboratory values, imaging reports, vital signs, medication lists, and patient-reported symptoms. Artificial intelligence systems integrated into the electronic health record are already performing guideline-concordance checking, risk stratification, and diagnostic suggestion with accuracy that challenges the median practicing physician. What they cannot yet do consistently is integrate the full texture of a clinical encounter: the subtle affect of a patient who is not taking their medications, the context of a difficult home situation that makes the textbook treatment plan unworkable, the gestalt of someone who looks sicker than their numbers suggest.

In the near term, artificial intelligence augmentation in Tier 2 will function similarly to Tier 1: It will amplify the reach of a single specialist. An endocrinologist managing a panel of diabetic patients can use artificial intelligence-assisted monitoring to flag early deterioration, adjust insulin protocols continuously between visits, and focus in-person encounter time on patients whose complexity genuinely requires human judgment. The practical result is increased panel sizes, reduced wait times, and better management of chronic disease at the population level, all meaningful gains for a health care system strained by specialist shortages.

The longer-term trajectory, however, points toward absorption rather than mere augmentation. As large language models with deep reasoning capabilities become embedded in clinical workflows, the question shifts from whether artificial intelligence can assist a Tier 2 specialist to whether the specialist’s role has been reduced to supervising artificial intelligence outputs and signing orders. That supervisory role is real and not trivial in the near term, but it is also a role that, with adequate regulatory adaptation and liability restructuring, need not require 12 years of post-baccalaureate training.

The honest projection is that deep thinking neural networks will, within 20 years, be capable of managing the majority of stable, protocol-amenable Tier 2 cases with minimal human oversight. What persists is the management of complexity, exception, and human context, and the question of how much of a specialty’s caseload those categories represent is one the profession should be asking now, not after the transition is already underway.

Hands and chaos: Why emergency medicine and surgery will be the last technical holdouts

Emergency medicine sits in a structurally protected position, though not an invulnerable one, and we should be honest about both. What protects emergency medicine from rapid artificial intelligence displacement is not cognitive complexity alone; it is environmental chaos and physical embodiment. Consider a single shift. In the span of four hours, I may manage an ST-elevation myocardial infarction, intubate a patient with difficult airway anatomy I have never seen catalogued in any textbook, navigate a conversation with a family who hold conflicting views on resuscitation, place a central line in a patient with no visible landmarks, improvise a treatment plan when the formulary is incomplete, and make a disposition decision for a patient who has no reliable historian, no records, and no clear diagnosis.

None of these tasks map cleanly onto current artificial intelligence capabilities. Natural language models handle the informational aspects well: differential generation, drug dosing, protocol retrieval. But the physical examination finding that does not fit, the look in a patient’s eyes that suggests they are sicker than their vitals imply, the procedural improvisation required when the first three approaches fail, these remain irreducibly human, at least for now.

Here too, there is a meaningful augmentation phase before any displacement becomes realistic. Emergency medicine is already using artificial intelligence tools for sepsis early warning, stroke and large vessel occlusion identification on computed tomography, and risk stratification for chest pain. These tools increase the cognitive bandwidth of the emergency physician, allowing earlier identification of deteriorating patients and more efficient allocation of workup resources. In an environment where the average emergency physician manages 15 or more patients simultaneously, artificial intelligence-assisted surveillance of the entire department in real time represents a genuinely transformative capability: not a replacement, but a force multiplier that extends the physician's reach across a panel of patients that would otherwise exceed safe human attention.

Surgery is even more protected in the near term. A robotic surgical system such as da Vinci assists a surgeon but does not replace one. The precision of robotic surgical assistance is extraordinary; the judgment required to decide what to do when you open an abdomen and find something unexpected remains the province of the trained human surgeon.

Emergency medicine and the surgical and interventional specialties will be the last to be displaced, not never displaced. Advanced deep thinking artificial intelligence architectures, once integrated with next-generation robotics and real-time environmental sensing, will eventually erode even this barrier. A fully autonomous surgical robot operating in a controlled elective environment is plausible within 30 years. Fully autonomous management of an undifferentiated emergency department patient is a harder problem; the environmental variance alone may require artificial general intelligence rather than narrow artificial intelligence to solve. But the protection this complexity provides is a matter of decades, not permanence. The profession should be defining which aspects of this work are irreducibly human before that question is decided for us.

Psychiatry is different in kind, not just degree, and that may be permanent

Every specialty described so far is resistant to artificial intelligence displacement on technical grounds, grounds that advanced artificial intelligence systems will eventually erode, whether in five years or 50. Psychiatry, and the cluster of specialties grouped with it, is resistant on philosophical and cultural grounds that may prove more durable than any technical barrier.

Before examining why, it is worth acknowledging an important demographic reality: The specialties in this tier are, by nature, low-volume in the lives of most people. The majority of individuals will never require inpatient psychiatric care. Most will never struggle with addiction in the clinical sense or require formal addiction medicine management. Psychotherapy, while more broadly utilized, remains the province of a minority of patients in any given year. And palliative care, by its very design, is typically engaged only at the end of life, a single, irreplaceable passage that most people will experience exactly once, in their most vulnerable hour.

This demographic reality does not diminish the importance of Tier 4; it amplifies it. These are the encounters that occur at the most vulnerable, most definitional moments of a human life. The very infrequency with which most people encounter these specialties makes the quality of those encounters matter more, not less. A patient sitting across from a psychiatrist on the day they disclose suicidal ideation for the first time, a family gathered around a palliative care physician as they navigate the transition to comfort measures, an individual in early recovery from opioid addiction sitting with a specialist who may themselves carry the weight of lived experience, these are not routine transactions. They are singular, high-stakes, identity-shaping moments. And across cultures, across generations, and with remarkable consistency, people want a human being present for them.

Even people who will never personally use these specialties understand intuitively that they exist, that they are staffed by humans, and that the option of human engagement is available if they ever need it. The social contract around Tier 4 is not about frequency of use. It is about what kind of world we want to inhabit: one in which the most vulnerable moments of human life are witnessed and held by other human beings, not processed by algorithms.

Consider what psychiatry actually does at its core. A psychiatrist treating a patient with major depressive disorder, borderline personality disorder, or schizophrenia is not merely diagnosing and prescribing. They are sitting with a person in their suffering. They are witnessing. They are holding a therapeutic relationship that, according to decades of outcome research, is itself the active ingredient, independent of the specific modality used. The alliance is the treatment.

Can an artificial intelligence form a therapeutic alliance? This is not merely a technical question; it is a deeply philosophical one touching on consciousness, empathy, authenticity, and what we mean when we say we feel understood. An artificial intelligence can simulate empathic responses. It can reflect language skillfully. It can provide cognitive-behavioral techniques with fidelity that equals or exceeds a trainee therapist. There are already peer-reviewed studies showing that patients disclose more to artificial intelligence systems than to human clinicians, presumably because judgment feels absent. And yet. Psychiatry treats the self. It treats identity, meaning, the narrative arc of a human life. When we consider allowing an artificial intelligence to restructure a person’s relationship to their own consciousness, to adjudicate what is pathological about their mind, to prescribe substances that alter their personhood, we are crossing a threshold that most cultures will resist with a force that no technological demonstration of superiority can easily overcome.

This resistance is not irrational. It reflects something real about the human need to have our inner life witnessed by another inner life. As a clinician, I observe that even patients who distrust physicians, who have been failed by the medical system repeatedly, who have excellent insight into their own illness, these patients want a human psychiatrist. Not because they believe the human is more accurate, but because the relationship itself is part of what they are healing toward.

Psychiatry also carries a uniquely fraught history of coercion, institutionalization, and the medicalization of social deviance, which makes society rightly cautious about delegating its practice to non-human agents. Who is accountable when an artificial intelligence's therapeutic intervention contributes to a patient's suicide? Who holds the power in a relationship where one party is a corporation's algorithm? These questions do not have clean answers, and the lack of answers will slow displacement regardless of technical progress. My prediction: Psychiatry will be the last medical specialty to be functionally replaced by artificial intelligence, and there is a non-trivial probability that it is never fully replaced in the way that radiology will be. The societal and philosophical resistance is not a bug in the system; it may be the correct answer.

What comes next for the profession and for patients

The near-term story is not one of replacement but of amplification: artificial intelligence tools enabling physicians in every tier to extend their reach, serve more patients, and apply their expertise where it matters most. Health systems, payers, and physician organizations should be designing policies that capture these gains, particularly in underserved areas where specialist access is most constrained, rather than resisting artificial intelligence integration until disruption forces the issue.

The longer-term story is more disruptive. As deep thinking neural networks mature from narrow classifiers into genuinely reasoning systems capable of integrating multimodal clinical data, the technical basis for physician involvement in Tier 1 and eventually Tier 2 will erode. Embodied artificial intelligence and advanced robotics will eventually challenge Tier 3. The timelines are long, the path is nonlinear, and the regulatory and liability frameworks will shape the pace as much as the technology itself.

Training a generation of radiologists in the traditional model, without honest acknowledgment of what is coming, is a disservice to those trainees. The same conversation is overdue in pathology, in diagnostic dermatology, and increasingly in the outpatient medical subspecialties. Procedural training and physical competence, by contrast, remain the most durable investment a physician can make in their own career, not permanently, but meaningfully, on a 20-year horizon.

And then there is Tier 4. The mental health crisis is deepening globally. The specialties most resistant to artificial intelligence displacement, and most needed at the most critical, most human moments of a person's life, are also the ones most chronically undersupplied with practitioners. That problem will not be solved by artificial intelligence, and it will not wait for the profession to finish its internal debate about whether artificial intelligence is friend or threat. It requires investment in human psychiatric training, human addiction medicine capacity, and human palliative care infrastructure. The urgency is now.

The physicians who will thrive in this transition are not those who deny what is coming, nor those who surrender to it, but those who understand its specific contours clearly enough to position themselves, and their patients, wisely within it. The reorganization of medicine by artificial intelligence is not a single event. It is a decades-long process with a predictable internal logic. The question is whether the profession engages that logic on its own terms, or waits to be reorganized by forces it refused to understand.

H. Michael Boulton is an emergency physician.

